My notes on “sideloading” APK files to an Amazon Fire TV HD using ADB.

I don’t do this often and had forgotten how, so this may help me out next time.

Getting and using Android Debug Bridge (adb)

Useful info here:

https://developer.android.com/studio/command-line/adb

Download the stable binaries for Mac, Linux or Windows from here:

https://developer.android.com/studio/releases/platform-tools

You can either add the location of the binaries to your PATH, or cd to them and run them directly like I did, e.g.

./adb help
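If you would rather go the PATH route, a minimal sketch for a POSIX-style shell might look like the following. The `PLATFORM_TOOLS_DIR` variable and its default of `$HOME/platform-tools` are my own assumptions for illustration; point it at wherever you unpacked the download.

```shell
# Hypothetical location: change this to wherever you unpacked platform-tools.
PLATFORM_TOOLS_DIR="${PLATFORM_TOOLS_DIR:-$HOME/platform-tools}"

# Append the directory to PATH only if it is not already there.
case ":$PATH:" in
  *":$PLATFORM_TOOLS_DIR:"*) ;;
  *) PATH="$PATH:$PLATFORM_TOOLS_DIR"; export PATH ;;
esac
```

With that in your shell profile, `adb help` should work from any directory, without the leading `./`.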

Download the APK files you want to install

For example

https://smartyoutubetv.github.io/en/

or Kodi

https://mirrors.kodi.tv/releases/android/arm/

I put the downloaded APK files in the same dir as the adb tools to keep things very simple.

Connect to your Amazon Fire TV

Find the IP address of your Amazon Fire device from Network Settings (From Settings, go to Device (or My Fire TV) > About > Network), for example mine was 192.168.0.176.

Enable ADB debugging on your Amazon Fire device via Settings (look under Device (or My Fire TV) > Developer options).

Connect from your client laptop/PC to the Fire TV, for example:

./adb connect 192.168.0.176:5555

you can also list local devices:

donaldsimpson@Donalds-iMac adb-tools % ./adb devices

List of devices attached

192.168.0.176:5555 unauthorized

192.168.0.59:5555 unauthorized
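The second column in that listing is the connection state: `unauthorized` means the device has not approved this computer yet, while `device` means it is ready for commands. As a rough sketch, a small helper can pull out the state for one address. The `ADB` variable and the `device_state` function name are my own, not part of adb.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Print the state column ("device", "unauthorized", "offline") for one serial.
# Prints nothing if the serial is not listed at all.
device_state() {
  "$ADB" devices | awk -v serial="$1" '$1 == serial { print $2 }'
}
```

For example, `device_state 192.168.0.176:5555` would have printed `unauthorized` for the listing above.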

Install APK to connected device

Once connected, installing a new app should be as simple as

./adb install yourapp.apk
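Since I keep the APK files in one directory, installing several of them can be wrapped in a loop. This is only a sketch: the `install_all` function name and the `ADB` variable are made up for illustration, and it assumes a single connected device.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Install every .apk found in the given directory (default: current directory).
# Returns non-zero if no APK files were found or if any install failed.
install_all() {
  dir=${1:-.}
  found=0
  failed=0
  for apk in "$dir"/*.apk; do
    [ -e "$apk" ] || continue   # the glob matched nothing
    found=1
    echo "Installing $apk"
    "$ADB" install "$apk" || failed=1
  done
  [ "$found" -eq 1 ] && [ "$failed" -eq 0 ]
}
```

Calling `install_all` with no argument works on the current directory, which matches keeping the APKs next to the adb tools.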

Note that if you have multiple devices you may get this message:

➜ adb-tools ./adb install smartyoutubetv_latest.apk

Performing Push Install

adb: error: failed to get feature set: more than one device/emulator

check the list of attached devices:

➜ adb-tools ./adb devices

List of devices attached

G070VM1904950F5U device

192.168.0.18:5555 device

then specify the device you are aiming for with “-s <address:port>” like this:

➜ adb-tools ./adb -s 192.168.0.18:5555 install smartyoutubetv_latest.apk

Performing Streamed Install

Success
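As an alternative to repeating `-s`, adb also reads the `ANDROID_SERIAL` environment variable to pick a device. The sketch below lists only the devices that are actually ready, which helps when choosing a value for it. The `ready_devices` function name and the `ADB` variable are my own.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Print the serial of every attached device whose state is "device".
ready_devices() {
  "$ADB" devices | awk '$2 == "device" { print $1 }'
}

# Example (not run here): target one device for all later adb commands.
#   export ANDROID_SERIAL=192.168.0.18:5555
#   "$ADB" install smartyoutubetv_latest.apk
```

An explicit `-s` on the command line still takes priority over the environment variable.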

I also had this response at one point:

./adb install smartyoutubetv_latest.apk

Performing Push Install

adb: error: failed to get feature set: device unauthorized.

This adb server's $ADB_VENDOR_KEYS is not set

Try 'adb kill-server' if that seems wrong.

Otherwise check for a confirmation dialog on your device.

… the last line prompted me to look at the Fire TV screen and notice it was asking me to approve the connection request from my laptop.

Doh.

Once approved the app installed no problem:

./adb install smartyoutubetv_latest.apk

Performing Push Install

smartyoutubetv_latest.apk: 1 file pushed, 0 skipped. 3.3 MB/s (7901934 bytes in 2.261s)

pkg: /data/local/tmp/smartyoutubetv_latest.apk

Success

Also, when doing this:

./adb -s 192.168.0.88:5555 install FlixVision_v2.9.2r.apk

adb: device '192.168.0.88:5555' not found

despite adb appearing to connect, the device was listed as offline:

error: device offline

This again turned out to be the Fire TV prompting me for approval on-screen, which I hadn’t noticed when connecting from my laptop.

Note to self – check for a dialog on-screen when having issues!
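To make that note harder to forget, a small polling loop can keep checking the state and nag about the on-screen dialog until the device is ready. This is a sketch under my own assumptions: the `wait_for_device` name, the retry count and the delay are all made up, and it relies only on the state column of `adb devices`.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Poll "adb devices" until the given serial reports the state "device".
# Usage: wait_for_device <address:port> [tries] [delay_seconds]
wait_for_device() {
  serial=$1
  tries=${2:-10}
  delay=${3:-3}
  while [ "$tries" -gt 0 ]; do
    state=$("$ADB" devices | awk -v s="$serial" '$1 == s { print $2 }')
    if [ "$state" = "device" ]; then
      return 0
    fi
    echo "$serial is ${state:-not listed}; check the TV screen for an approval dialog" >&2
    tries=$((tries - 1))
    sleep "$delay"
  done
  return 1
}
```

Something like `wait_for_device 192.168.0.176:5555 && ./adb install yourapp.apk` then only attempts the install once the connection has been approved.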

Updating an existing app

I’ve had an outdated Kodi install for ages and wanted to update that while I was here. The process is simple, just add an -r for “replace existing application”:

./adb install -r kodi-18.8-Leia-armeabi-v7a.apk

Performing Push Install

kodi-18.8-Leia-armeabi-v7a.apk: 1 file pushed, 0 skipped. 3.5 MB/s (63508040 bytes in 17.391s)

pkg: /data/local/tmp/kodi-18.8-Leia-armeabi-v7a.apk

Success

This went very smoothly, all my settings, connections and shares etc were still there after the upgrade, and it looks a lot nicer for it too.
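To confirm which version is installed before and after an update, the package manager's dump can be queried. A minimal sketch follows, assuming the dump contains a `versionName=` line (which is what I would expect from `dumpsys package`). The `installed_version` function name and the `ADB` variable are my own.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Print the first versionName reported for a package, e.g. org.xbmc.kodi.
# Carriage returns are stripped because some adb versions emit CRLF line endings.
installed_version() {
  "$ADB" shell dumpsys package "$1" \
    | tr -d '\r' \
    | sed -n 's/^[[:space:]]*versionName=//p' \
    | head -n 1
}
```

Running `installed_version org.xbmc.kodi` on either side of the `install -r` shows whether the upgrade took effect.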

That’s it – there’s a ton of useful info on other commands and options from

./adb help

I found some more useful info on connecting from ADB to Fire TV here:

https://developer.amazon.com/docs/fire-app-builder/connecting-adb-to-fire-tv.html

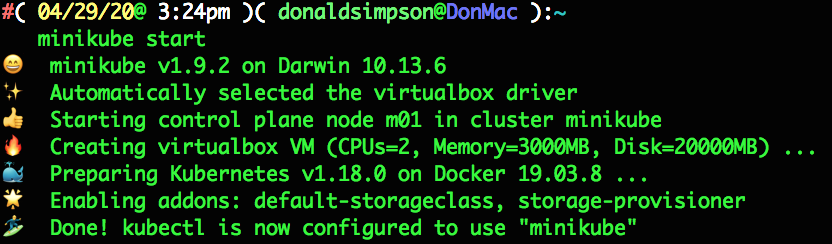

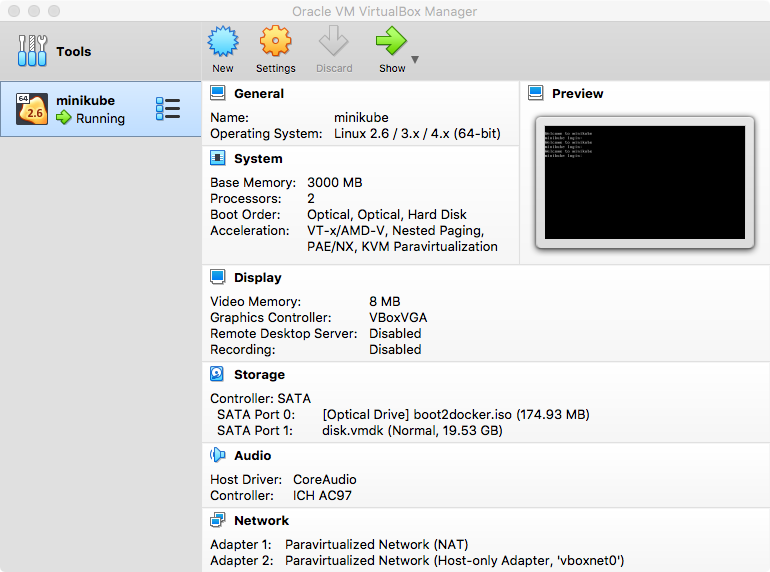

Starting up Kodi on Amazon FireTV remotely

After getting the above sorted out, I wanted to find a way to start Kodi on my FireTV without having to switch my projector on & off to do so.

I use Kodi as an AirPlay target for music during the day, and it switches itself off overnight. I could probably change that.

Using ADB tools, I connect to the device remotely, as before, with:

./adb connect 192.168.0.176:5555

though normally that comes back with “already connected to…”

then start up Kodi using the “Android activity manager”, “am”:

./adb shell am start -n org.xbmc.kodi/.Splash

This takes a little while to start, but after about 30 seconds I can connect to the Kodi web interface on port 8080 of my FireTV, and the AirPlay target becomes available.
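Rather than guessing when Kodi is up, the start command can be followed by a loop that polls the web interface. This is a sketch: the `start_kodi_and_wait` name, the retry count and the delay are my own choices, and it assumes `curl` is installed and that ADB listens on port 5555 as in the examples above.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Start Kodi, then wait for its web interface on port 8080 to respond.
# Usage: start_kodi_and_wait <fire_tv_ip> [tries] [delay_seconds]
start_kodi_and_wait() {
  host=$1
  tries=${2:-12}
  delay=${3:-5}
  "$ADB" -s "$host:5555" shell am start -n org.xbmc.kodi/.Splash || return 1
  while [ "$tries" -gt 0 ]; do
    # Without -f, curl succeeds on any HTTP response (even an auth error),
    # which is enough to tell that the web server is listening.
    if curl -s -o /dev/null --max-time 5 "http://$host:8080/"; then
      return 0
    fi
    tries=$((tries - 1))
    sleep "$delay"
  done
  return 1
}
```

With the defaults, `start_kodi_and_wait 192.168.0.176` gives Kodi roughly a minute to come up before giving up.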

It looks like there are many other interesting things you can do with “am”.

Uninstalling packages with adb

List installed packages

./adb shell pm list packages

and filter for whatever you’re looking for (e.g. “guard”)

./adb shell pm list packages | grep -i guard

then uninstall that package name:

./adb uninstall com.adguard.vpn
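The `pm list packages` output prefixes each name with `package:`, which has to be dropped before passing the name to `adb uninstall`. A small sketch that does the filtering and the stripping in one go; the `find_packages` function name and the `ADB` variable are my own.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Print installed package names (without the "package:" prefix) that match
# a case-insensitive pattern. Returns non-zero if nothing matches.
find_packages() {
  "$ADB" shell pm list packages \
    | tr -d '\r' \
    | sed -n 's/^package://p' \
    | grep -i -- "$1"
}
```

For example, `find_packages guard` would print `com.adguard.vpn` on my device. It is worth reading the output before uninstalling anything, since a loose pattern can match more than one package.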

Update on smartyoutube to fix ads

Quick update specifically on Smart Youtube TV on Android. This was brought on by my initial install of Smart Youtube TV starting to show adverts (a lot).

I had installed Smart Youtube TV, version 6.17.739 (at time of writing this is still the latest stable release available) on my Android Fire – details above. This worked very well for months, but it has recently stopped filtering out YouTube advertisements.

Having not found an update and while looking for another solution, I found “SmartTubeNext Beta”, which looks to be pretty stable and widely used, for a beta version:

https://www.apklinker.com/apk/liskovsoft/

From that site, it looks like it has been around 4 months since SmartYouTube was updated, but SmartTubeNext is actively being developed, so could be worth a try – here’s how:

Get the latest smarttube beta APK (via wget, or download via browser from here: https://smartyoutubetv.github.io/)

wget https://github.com/yuliskov/SmartTubeNext/releases/download/latest/smarttube_beta.apk

connect to your Android device (update the IP to match yours):

./adb connect 192.168.0.176:5555

install the APK:

./adb -s 192.168.0.176:5555 install -r smarttube_beta.apk

All done.
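The download, connect and install steps can be chained into one function for next time. This is a sketch only: the `sideload` name and the `ADB` variable are my own, and it assumes `wget` is available. Note that some adb versions return success from `adb connect` even when the connection fails, so the install step is the real check.

```shell
# Path to the adb binary; defaults to the copy in the current directory.
ADB="${ADB:-./adb}"

# Download an APK, connect to the device and install (or replace) the app.
# Usage: sideload <apk_url> <address:port>
sideload() {
  url=$1
  serial=$2
  apk=${url##*/}                       # file name = last part of the URL
  wget -q -O "$apk" "$url" || return 1
  "$ADB" connect "$serial" || return 1
  "$ADB" -s "$serial" install -r "$apk"
}
```

Usage would be along the lines of `sideload https://github.com/yuliskov/SmartTubeNext/releases/download/latest/smarttube_beta.apk 192.168.0.176:5555`, with the IP updated to match your device.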

I wasn’t sure if this would replace the existing SmartYouTube (which is why I added the -r switch that wasn’t necessary), but it’s ok: it’s installed as a different app so the stable version is kept and available should there be any issues with the beta version.

This version looks a lot better than the previous/stable SmartYouTube.

List of improvements from their site:

- 4K support

- runs without Google Services

- designed for TV screens

- stock controller support

- external keyboard support

Personally I really like the better controller support, and the overall look is much more suitable for a large screen. It’s also a lot more customisable. And, most importantly, it removes all the adverts.